https://twitter.com/AdrianChen

https://fieldofvision.org/the-moderators

Few people know that Adrian Chen, a young but already influential writer and journalist, started out as a (hilarious) YouTuber, back when YouTube was still a goldmine for anyone who paid enough attention and could scavenge for the genius homebrew performer, the genuine freak and the uncanny in general. That background hints at his acute instinct for Internet cultures, from the darkest 4chan humor to opaque government propaganda.

After contributing for years to Wired, Slate, The New York Times and many others, he now writes for The New Yorker. He is also the founder of I.R.L. Club, a regular gathering for people from the Internet to meet, well, I.R.L. (that is, “in real life” in geek jargon).

Chen has written about the Silk Road, a darknet market that facilitated online drug purchases; Anonymous; meme cultures; trolls; Bitcoin; and other apparently obscure issues that are actually shaping not only the Internet we know, but also the future of our (networked) society.

In 2012 he got in touch with the admin of a few violent, explicit, and extremely controversial forums on Reddit, a “fountain of racism, porn, gore, misogyny, incest, and exotic abominations yet unnamed”. Chen decided to dox him, that is, to expose his real identity. The middle-aged man, who in spite of his hobby led a quiet life as a programmer in Texas, immediately lost his job and himself became the target of all kinds of harassment. The whole story sparked a huge and complicated debate about trolls and freedom on the Internet.

In 2014 Chen started investigating some strange news about unreported accidents at a chemical plant in Louisiana. Several Twitter users had spread the news through dozens of posts, and even fake pictures and videos. No accident had ever happened. “It was a highly coordinated disinformation campaign,” Chen wrote, “involving dozens of fake accounts that posted hundreds of tweets for hours, targeting a list of figures precisely chosen to generate maximum attention.” Chen managed to trace the Twitter incident back to the Internet Research Agency, a shadowy company in St. Petersburg (Russia), a “troll farm” that uses all kinds of strategies to spread disinformation. He travelled to Russia to report on it. What he found was a thick smokescreen, until he himself became the target of Internet trolls in a perfectly orchestrated smear campaign that he had been unwittingly caught up in since the beginning of his trip.

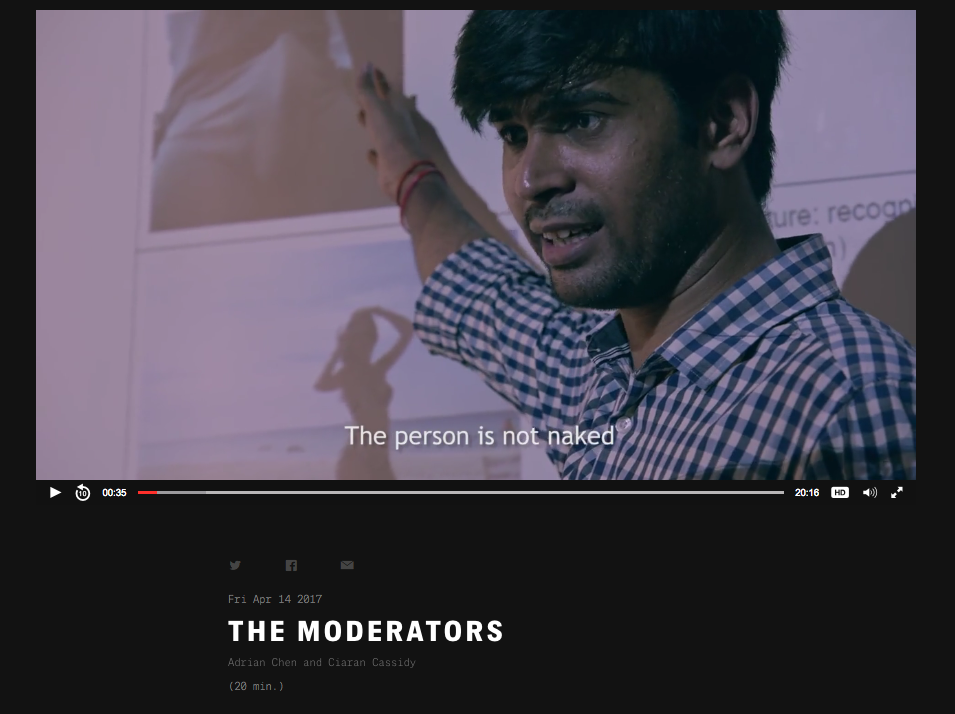

Since 2014 Adrian Chen has been shedding light on another hidden part of our daily experience on the Internet: social network moderation. How come Facebook is not flooded with porn, offensive material, gore or cruel images? Well, in fact it is. It is just that an army of outsourced moderators, mostly working from Asian countries, censors that content according to guidelines established by the client. Those guidelines are of course confidential, or at least they were until Chen published them.

The work of those moderators is kept entirely out of sight by the big platforms, probably because it could spark some undesirable debate. “Even technology that seems to exist only as data on a server rests on tedious and potentially dangerous human labor”, says Chen. The study of hidden social media moderation reveals something else, too: “Leaving the moderation process opaque offers a degree of flexibility and plausible deniability when dealing with politically sensitive issues”.

Moderation became a hot subject in 2016, as Trump’s presidential campaign began to gain huge traction on social networks (a few months earlier something similar had happened in the UK with the so-called #brexit campaign). Both Facebook and Twitter were involved in high-profile controversies over their capacity to filter out fake news and hate speech, and were criticized for being the carriers of a new type of propaganda whose effect was multiplied not by government megaphones but by users themselves (or at least that is how it looked). Mounting a defense proved difficult for Facebook and Twitter, given their hybrid nature: they are communities of users and, at the same time, incredibly powerful sources of information with no need to comply with any of the existing media regulations and professional guidelines.

The New Networked Normal

A European partnership and programme in collaboration with Abandon Normal Devices (UK), Centre de Cultura Contemporània de Barcelona (Catalonia, Spain), The Influencers (Catalonia, Spain), Transmediale (Germany) and STRP (Netherlands).

This project has been funded with support from the European Commission. This communication reflects the views only of the author, and the Commission cannot be held responsible for any use which may be made of the information contained therein.